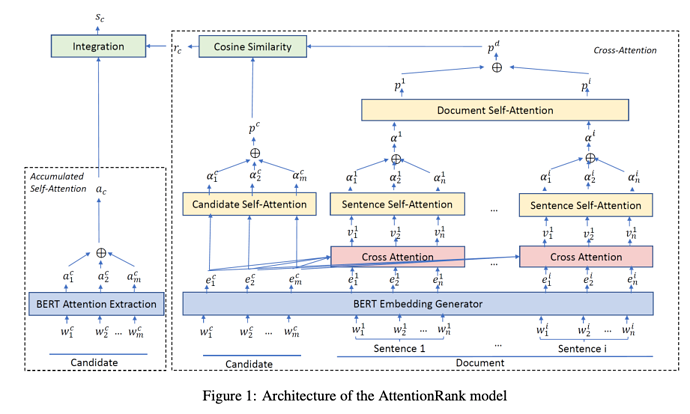

This paper proposes a fully unsupervised key-phrase extraction method that uses attention weights generated by a BERT model. The authors first use PoS tagging to extract noun phrases as candidates. Every sentence is then embedded by BERT, and its attention weights are collected. Each candidate embedding is a weighted average of the candidate's token embeddings, with weights taken from the attention within the candidate itself. A document embedding is computed separately for each candidate, using cross attention between that candidate and each sentence. The dot product of these two embeddings gives the similarity score used to rank the candidate.
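To make the pipeline concrete, here is a minimal NumPy sketch of how I read the scoring step. It is not the paper's exact formulation. The function names (`candidate_embedding`, `document_embedding`, `rank_candidates`) are hypothetical, and two choices are my own assumptions: the token weights are taken as the total attention each token receives within the candidate, and the cross attention is a softmax over candidate-sentence dot products. The token vectors and attention matrices are assumed to come from a BERT forward pass that is not shown here.

```python
import numpy as np


def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - x.max())
    return e / e.sum()


def candidate_embedding(token_vecs, self_attn):
    """Attention-weighted average of a candidate's token vectors.

    token_vecs: (n, d) token embeddings of the candidate phrase.
    self_attn:  (n, n) attention among the candidate's own tokens.
    Assumption: a token's weight is the total attention it receives.
    """
    weights = self_attn.sum(axis=0)
    weights = weights / weights.sum()
    return weights @ token_vecs


def document_embedding(cand_vec, sentence_vecs):
    """Candidate-specific document vector.

    Assumption: the cross attention is a softmax over candidate-sentence
    dot products, computed outside of BERT's attention heads.
    """
    weights = softmax(sentence_vecs @ cand_vec)
    return weights @ sentence_vecs


def rank_candidates(candidates, sentence_vecs):
    """Rank candidates by the dot product of their two embeddings.

    candidates: dict mapping phrase -> (token_vecs, self_attn).
    Returns the phrases sorted from highest to lowest score.
    """
    scores = {}
    for phrase, (token_vecs, self_attn) in candidates.items():
        c = candidate_embedding(token_vecs, self_attn)
        d = document_embedding(c, sentence_vecs)
        scores[phrase] = float(c @ d)
    return sorted(scores, key=scores.get, reverse=True)
```

With real data, `token_vecs` and `self_attn` would be sliced out of the BERT hidden states and attention maps for the span each noun phrase occupies, and `sentence_vecs` would hold one vector per sentence of the document.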

Comments

- The overall method is solid and the results are promising.

- Although Fig. 1 shows a candidate and a document being fed into BERT together, they might not actually be embedded that way: Eq. 4-8 indicate that the cross attention is not really produced by the BERT attention heads.

Rating

- 5: Transformative: This paper is likely to change our field. It should be considered for a best paper award.

- 4.5: Exciting: It changed my thinking on this topic. I would fight for it to be accepted.

- 4: Strong: I learned a lot from it. I would like to see it accepted.

- 3.5: Leaning positive: It can be accepted more or less in its current form. However, the work it describes is not particularly exciting and/or inspiring, so it will not be a big loss if people don’t see it in this conference.

- 3: Ambivalent: It has merits (e.g., it reports state-of-the-art results, the idea is nice), but there are key weaknesses (e.g., I didn’t learn much from it, evaluation is not convincing, it describes incremental work). I believe it can significantly benefit from another round of revision, but I won’t object to accepting it if my co-reviewers are willing to champion it.

- 2.5: Leaning negative: I am leaning towards rejection, but I can be persuaded if my co-reviewers think otherwise.

- 2: Mediocre: I would rather not see it in the conference.

- 1.5: Weak: I am pretty confident that it should be rejected.

- 1: Poor: I would fight to have it rejected.
