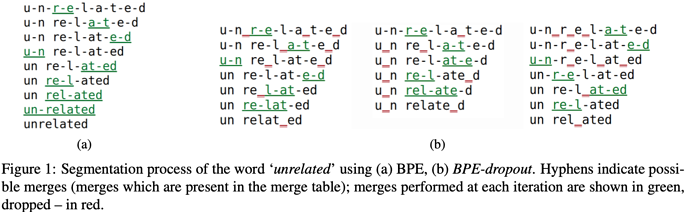

BPE tokenization is a de-facto technique to reduce vocabulary size by splitting a word into subwords following a merging table, which is trained by merging frequent subwords to a larger one till the desired vocabulary size is reached. This paper introduces a very simple method to improve generalization via randomly drop some rules in the merging table.

As shown in Figure 1, the word “unrelated” could have several different segmentation during training while only the original segmentation in subfigure 1 is used during inference. Their method shows statistical significance on several MT datasets.

Comments

Personally I like this kind of “simple” methods since performance boost is obtained for free which greatly benefits production systems. The only regret is that these authors didn’t experiment their dropout on any pretrained Transformers.

- 5: Transformative: This paper is likely to change our field. It should be considered for a best paper award.

- 4.5: Exciting: It changed my thinking on this topic. I would fight for it to be accepted.

- 4: Strong: I learned a lot from it. I would like to see it accepted.

- 3.5: Leaning positive: It can be accepted more or less in its current form. However, the work it describes is not particularly exciting and/or inspiring, so it will not be a big loss if people don’t see it in this conference.

- 3: Ambivalent: It has merits (e.g., it reports state-of-the-art results, the idea is nice), but there are key weaknesses (e.g., I didn’t learn much from it, evaluation is not convincing, it describes incremental work). I believe it can significantly benefit from another round of revision, but I won’t object to accepting it if my co-reviewers are willing to champion it.

- 2.5: Leaning negative: I am leaning towards rejection, but I can be persuaded if my co-reviewers think otherwise.

- 2: Mediocre: I would rather not see it in the conference.

- 1.5: Weak: I am pretty confident that it should be rejected.

- 1: Poor: I would fight to have it rejected.