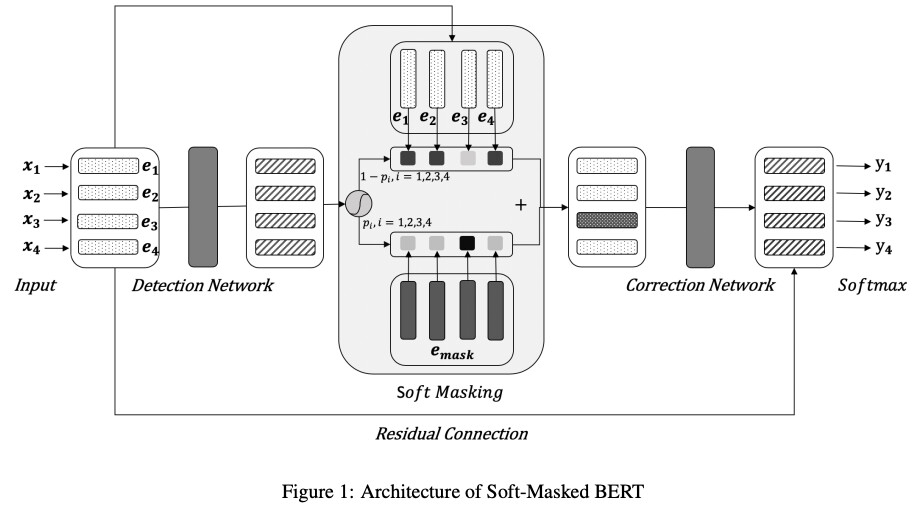

Chinese spelling error correction (CSC) is a challenging task because it requires a high level of language understanding. This paper proposes Soft-Masked BERT, which consists of an error detection network and a correction network. The error detection network takes a sequence of characters as input and predicts, for each character, the probability that it is misspelled. This probability is used as an interpolation factor between the character's embedding and the [MASK] embedding. The fused embeddings are then fed into the correction network, which predicts the corrections.
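The soft-masking step summarized above can be sketched as a per-character interpolation, e'_i = p_i · e_mask + (1 − p_i) · e_i, where p_i is the detected error probability. This is a minimal sketch under stated assumptions, not the authors' code: the function name `soft_mask` is hypothetical, the probabilities are taken as given (the detection network itself is not modeled), and toy 2-dimensional embeddings stand in for real BERT embeddings.

```python
from typing import List

Vector = List[float]


def soft_mask(
    char_embeddings: List[Vector],
    error_probs: List[float],
    mask_embedding: Vector,
) -> List[Vector]:
    """Hypothetical helper: move each character embedding toward [MASK].

    For character i with error probability p_i:
        e'_i = p_i * e_mask + (1 - p_i) * e_i
    p_i = 0 keeps the original embedding; p_i = 1 replaces it with [MASK].
    """
    if len(char_embeddings) != len(error_probs):
        raise ValueError("need exactly one error probability per character")
    fused: List[Vector] = []
    for e_i, p_i in zip(char_embeddings, error_probs):
        if not 0.0 <= p_i <= 1.0:
            raise ValueError("error probabilities must lie in [0, 1]")
        # Element-wise interpolation between [MASK] and the character embedding.
        fused.append([p_i * m + (1.0 - p_i) * x for x, m in zip(e_i, mask_embedding)])
    return fused


if __name__ == "__main__":
    # Toy example: the second character is judged likely misspelled (p = 0.75).
    print(soft_mask([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.75], [2.0, 2.0]))
    # [[1.0, 0.0], [1.5, 1.75]]
```

Because the mask is "soft", a character with p_i < 1 still contributes a (1 − p_i) share of its own embedding to the correction network's input.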

Comments

- The idea is very intuitive and it indeed outperforms the BERT baseline.

- However, misspelled characters often provide very strong clues about the correct characters. When you mask them out, you lose their features.

- The approach is not sound when a sentence contains multiple errors, since they are predicted independently.

- In Sec. 3.2, what are BERT-Pretrain and BERT-Finetune? I assume BERT-Pretrain is not fine-tuned, but why do you need such a weak baseline?

- I would personally prefer a short paper presenting the same content in more compact writing.
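The concern about multiple errors being predicted independently can be illustrated with a toy case. Assume, purely for illustration, a hypothetical joint distribution over the corrections at two erroneous positions, with placeholder characters "A", "B", "X", "Y", "Z". Picking the most likely character at each position separately (per-position argmax) can yield a pair that has zero probability as a whole, while the best joint pair is different. The helper names are hypothetical and this is not a model of the paper's correction network.

```python
from typing import Dict, Tuple

Pair = Tuple[str, str]


def marginals(joint: Dict[Pair, float]) -> Tuple[Dict[str, float], Dict[str, float]]:
    """Sum the joint distribution over each position to get per-position scores."""
    first: Dict[str, float] = {}
    second: Dict[str, float] = {}
    for (a, b), p in joint.items():
        first[a] = first.get(a, 0.0) + p
        second[b] = second.get(b, 0.0) + p
    return first, second


def independent_decode(joint: Dict[Pair, float]) -> Pair:
    """Choose the best character at each position separately."""
    first, second = marginals(joint)
    return max(first, key=first.get), max(second, key=second.get)


def joint_decode(joint: Dict[Pair, float]) -> Pair:
    """Choose the best pair of characters as a whole."""
    return max(joint, key=joint.get)


if __name__ == "__main__":
    # Hypothetical joint distribution; unlisted pairs have probability 0.
    toy = {("A", "X"): 0.4, ("B", "Y"): 0.35, ("B", "Z"): 0.25}
    print(independent_decode(toy))  # ('B', 'X'), a pair absent from the joint
    print(joint_decode(toy))        # ('A', 'X')
```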

Rating

- 5: Transformative: This paper is likely to change our field. It should be considered for a best paper award.

- 4.5: Exciting: It changed my thinking on this topic. I would fight for it to be accepted.

- 4: Strong: I learned a lot from it. I would like to see it accepted.

- 3.5: Leaning positive: It can be accepted more or less in its current form. However, the work it describes is not particularly exciting and/or inspiring, so it will not be a big loss if people don’t see it in this conference.

- 3: Ambivalent: It has merits (e.g., it reports state-of-the-art results, the idea is nice), but there are key weaknesses (e.g., I didn’t learn much from it, evaluation is not convincing, it describes incremental work). I believe it can significantly benefit from another round of revision, but I won’t object to accepting it if my co-reviewers are willing to champion it.

- 2.5: Leaning negative: I am leaning towards rejection, but I can be persuaded if my co-reviewers think otherwise.

- 2: Mediocre: I would rather not see it in the conference.

- 1.5: Weak: I am pretty confident that it should be rejected.

- 1: Poor: I would fight to have it rejected.
