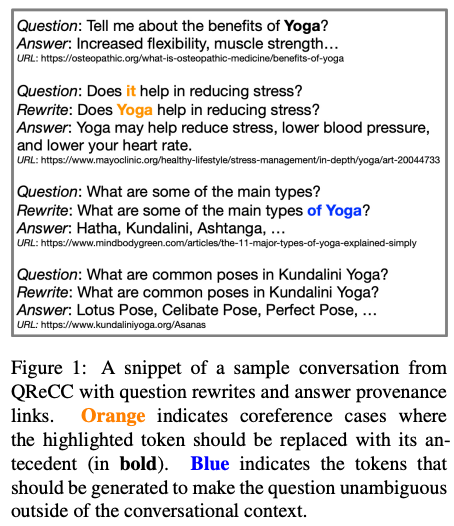

Question rewriting (QR) is the task of rewriting a question from a conversation so that it no longer depends on the preceding context and is self-contained. QR is often used as a bridge between open-domain QA and conversational QA: reading comprehension approaches from open-domain QA are augmented with a QR component to handle conversational context. This resource paper presents a large-scale QR dataset of 14K conversations with 80K QA pairs, along with the procedure used to create it.
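To make the "bridge" idea concrete, here is a minimal sketch of a rewrite-then-read pipeline. It is not the paper's model: `toy_rewrite`, `last_entity`, and `answer` are hypothetical names, and the rewriter is a crude rule (replace the first pronoun with the most recent capitalized phrase in the history) standing in for a trained seq2seq rewriter.

```python
import re
from typing import Callable, List, Optional

# Pronouns the toy rewriter replaces (illustrative, far from exhaustive).
PRONOUN = re.compile(r"\b(he|she|it|they|him|her|them)\b", re.IGNORECASE)
# Very rough proxy for an entity mention: a run of capitalized words.
CAP_PHRASE = re.compile(r"[A-Z][a-z]+(?: [A-Z][a-z]+)*")


def last_entity(history: List[str]) -> Optional[str]:
    """Return the last capitalized phrase in the most recent turn that has one."""
    for turn in reversed(history):
        matches = CAP_PHRASE.findall(turn)
        if matches:
            return matches[-1]
    return None


def toy_rewrite(question: str, history: List[str]) -> str:
    """Assumption: a rule-based stand-in for a learned question rewriter."""
    entity = last_entity(history)
    if entity is None:
        return question
    # Replace only the first pronoun; a real rewriter would do much more.
    return PRONOUN.sub(lambda m: entity, question, count=1)


def answer(
    question: str,
    history: List[str],
    rewrite: Callable[[str, List[str]], str],
    reader: Callable[[str], str],
) -> str:
    """Rewrite the question to be self-contained, then hand it to any
    single-turn (open-domain) QA reader that knows nothing about dialogue."""
    standalone = rewrite(question, history)
    return reader(standalone)


history = ["Who wrote Dune?", "Frank Herbert"]
print(toy_rewrite("When was he born?", history))
# prints: When was Frank Herbert born?
```

The point of the design is that `reader` never sees the conversation: all of the context handling is isolated in the rewriting step, which is why an existing open-domain QA system can be reused unchanged.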

Comments

- This paper also provides a brief introduction to recent developments in conversational QA.

- Their experiments show that a generative model outperforms SOTA coreference resolution models on the QR subtask of replacing pronouns. This suggests that seq2seq models may be much more powerful than core NLP models, but I need more time and more evidence before I am convinced.

Rating

- 5: Transformative: This paper is likely to change our field. It should be considered for a best paper award.

- 4.5: Exciting: It changed my thinking on this topic. I would fight for it to be accepted.

- 4: Strong: I learned a lot from it. I would like to see it accepted.

- 3.5: Leaning positive: It can be accepted more or less in its current form. However, the work it describes is not particularly exciting and/or inspiring, so it will not be a big loss if people don’t see it in this conference.

- 3: Ambivalent: It has merits (e.g., it reports state-of-the-art results, the idea is nice), but there are key weaknesses (e.g., I didn’t learn much from it, evaluation is not convincing, it describes incremental work). I believe it can significantly benefit from another round of revision, but I won’t object to accepting it if my co-reviewers are willing to champion it.

- 2.5: Leaning negative: I am leaning towards rejection, but I can be persuaded if my co-reviewers think otherwise.

- 2: Mediocre: I would rather not see it in the conference.

- 1.5: Weak: I am pretty confident that it should be rejected.

- 1: Poor: I would fight to have it rejected.
