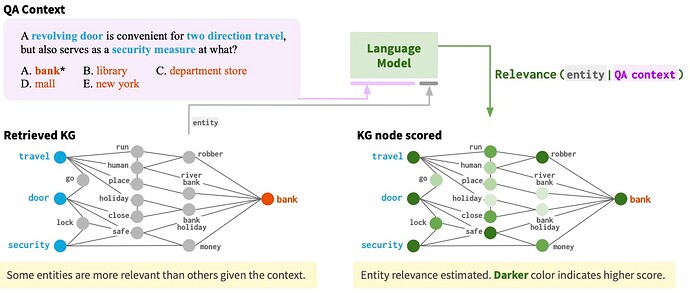

This paper combines language models with knowledge graphs (KGs) to improve inference in question answering (QA). Because KG nodes are noisy, the authors concatenate each KG entity with the QA context to score its relevance, then use that relevance score as part of the node features for attention computation in the graph attention network (GAT). Their models perform comparably to existing models of similar size but worse than large models such as T5.

Comments

- The relevance idea is smart, but it must be very slow, because every KG entity has to be scored separately against the QA context.
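To make the cost concern concrete, here is a minimal sketch of per-entity relevance scoring. It assumes the scorer is a language model called once per concatenated input; `score_nodes`, `CountingScorer`, and the example strings are hypothetical names for illustration, not the authors' code, and the placeholder score is not a real LM probability.

```python
from typing import Callable, Dict, List


def score_nodes(
    qa_context: str,
    entities: List[str],
    lm_score: Callable[[str], float],
) -> Dict[str, float]:
    """Score each KG entity with one scorer call on the QA context plus the entity."""
    return {entity: lm_score(qa_context + " " + entity) for entity in entities}


class CountingScorer:
    """Hypothetical stand-in for a language model that counts its own calls."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, text: str) -> float:
        self.calls += 1
        return float(len(text))  # placeholder score, NOT a real LM probability


scorer = CountingScorer()
entities = ["bird", "wing", "airplane"]
scores = score_nodes("What do birds use to fly?", entities, scorer)
print(scorer.calls)  # one scorer call per entity
```

Under this assumption the number of scorer calls grows linearly with the number of retrieved KG nodes per question, which is where the slowdown would come from unless the calls are batched or cached.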

> We first embed the relevance score of each node $t$ by
>
> \begin{align} \boldsymbol{\rho}_t &= f_\rho(\rho_t), \end{align}
>
> where $f_\rho: \mathbb{R} \rightarrow \mathbb{R}^{D/2}$ is an MLP.

- How exactly do you embed the relevance score, given that it is a scalar? Won't you get the same D/2 elements in your feature vector, and is that desirable?
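One way to probe this question is a toy version of the mapping $f_\rho: \mathbb{R} \rightarrow \mathbb{R}^{D/2}$. The sketch below is an assumption-laden illustration: the paper only says that $f_\rho$ is an MLP, so the single hidden layer, the `tanh` activation, the hidden width, the random initialization, and the name `make_mlp` are all hypothetical choices.

```python
import math
import random
from typing import Callable, List


def make_mlp(out_dim: int, hidden_dim: int = 8, seed: int = 0) -> Callable[[float], List[float]]:
    """Build a tiny scalar-to-vector MLP with random weights (illustrative only)."""
    rng = random.Random(seed)
    w1 = [rng.gauss(0, 1) for _ in range(hidden_dim)]  # input -> hidden weights
    b1 = [rng.gauss(0, 1) for _ in range(hidden_dim)]  # hidden biases
    w2 = [[rng.gauss(0, 1) for _ in range(hidden_dim)] for _ in range(out_dim)]
    b2 = [rng.gauss(0, 1) for _ in range(out_dim)]     # output biases

    def f_rho(rho: float) -> List[float]:
        # Hidden layer: each unit sees the same scalar but has its own weight and bias.
        hidden = [math.tanh(w * rho + b) for w, b in zip(w1, b1)]
        # Output layer: each of the out_dim coordinates has its own weight row.
        return [sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(w2, b2)]

    return f_rho


D = 8  # assumed feature dimension, chosen only for the example
f_rho = make_mlp(out_dim=D // 2)
embedding = f_rho(0.7)
print(embedding)
```

Because every output coordinate has its own weights, the D/2 elements generally differ from one another. They would all be identical only if the scalar were broadcast directly rather than passed through an MLP. Whether a learned embedding of a single scalar adds much information is a separate question that this sketch does not answer.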

Rating

- 5: Transformative: This paper is likely to change our field. It should be considered for a best paper award.

- 4.5: Exciting: It changed my thinking on this topic. I would fight for it to be accepted.

- 4: Strong: I learned a lot from it. I would like to see it accepted.

- 3.5: Leaning positive: It can be accepted more or less in its current form. However, the work it describes is not particularly exciting and/or inspiring, so it will not be a big loss if people don’t see it in this conference.

- 3: Ambivalent: It has merits (e.g., it reports state-of-the-art results, the idea is nice), but there are key weaknesses (e.g., I didn’t learn much from it, evaluation is not convincing, it describes incremental work). I believe it can significantly benefit from another round of revision, but I won’t object to accepting it if my co-reviewers are willing to champion it.

- 2.5: Leaning negative: I am leaning towards rejection, but I can be persuaded if my co-reviewers think otherwise.

- 2: Mediocre: I would rather not see it in the conference.

- 1.5: Weak: I am pretty confident that it should be rejected.

- 1: Poor: I would fight to have it rejected.
